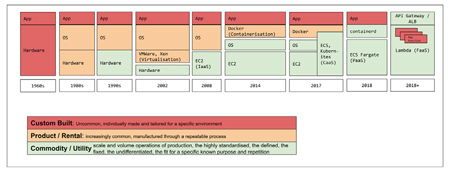

The FaaS model is more secure. It scales automatically. There are no servers to manage. There is unprecedented transparency into costs. It enables new tooling and new workflows with less code and standardised storage services. It frees us from worrying about which "service" a particular piece of code needs to live in. And it's done in a consistent, repeatable way, at scale.

At every progressive step in this chain we've had to upskill people, build new tools and adapt. At every point of disruption, the next step ahead felt uncomfortable.

The reality is that a new startup today will use FaaS. That also means they will launch into production today. They will move faster and be more productive. They won't need complicated tooling like Kubernetes or even today’s concept of DevOps.

How does 99designs as a company stay competitive? We adapt and change as the technology evolves. Those who don't make these leaps are eventually left behind.
